- Big Data

- Data Analytics

- Cloud Solutions

- Legacy System Modernization

Organizations rely on consistent, high-quality data pipelines to support analytics and decision-making. Acropolium developed a custom API integration platform designed to enable scalable API data integration, normalize data from multiple sources, and deliver structured datasets for BI reporting.

client

NDA

Netherlands

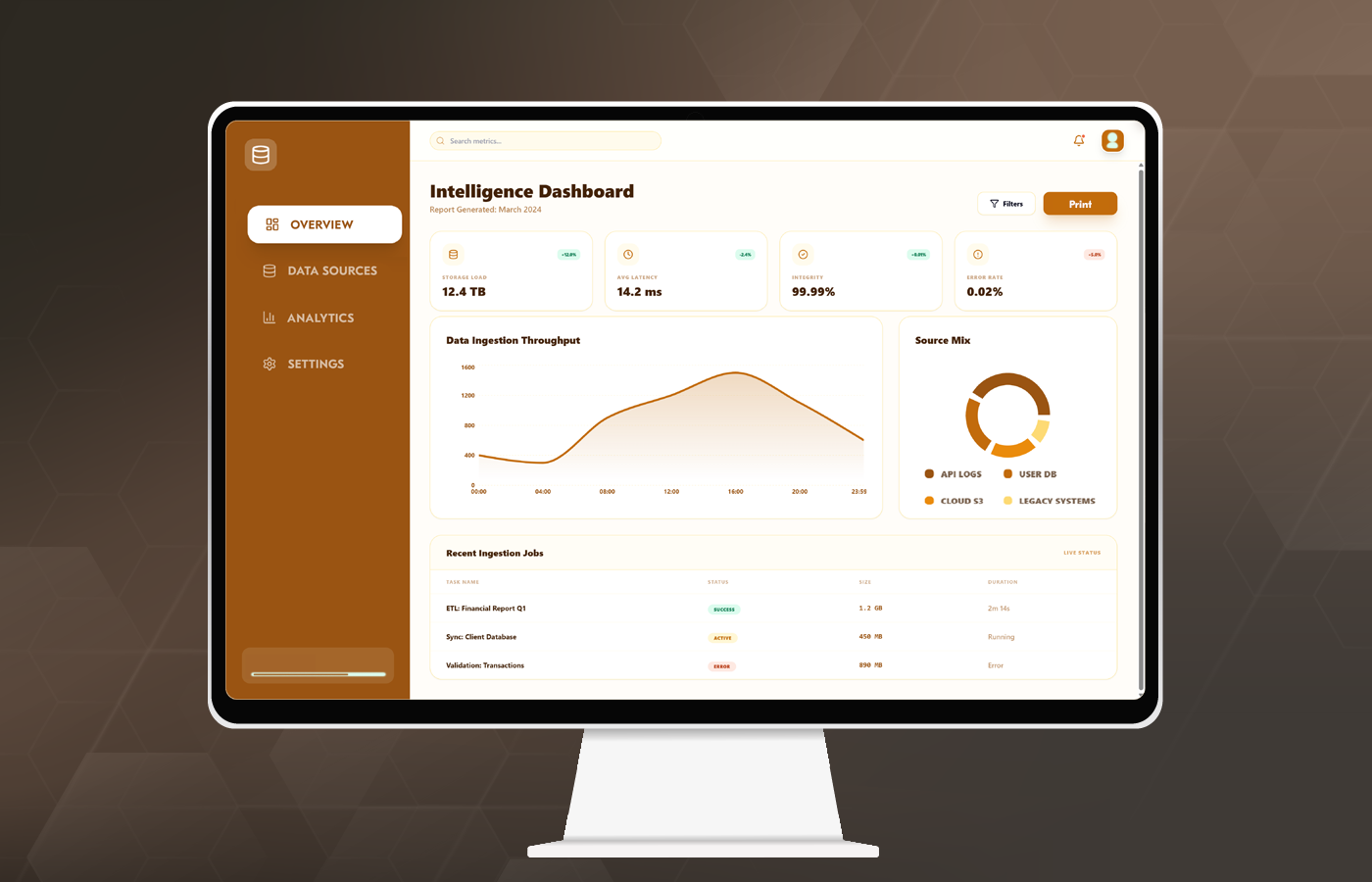

The client operates a data portal focused on collecting and processing statistical data from multiple external systems, including HR platforms and other third-party APIs. The platform aggregates incoming data, transforms it into structured formats, and delivers it to BI teams for further analysis and reporting.

The system plays a central role in enabling data integration for BI, acting as a bridge between fragmented data sources and analytical tools. It supports continuous data ingestion and transformation, so that analysts work with consistent and reliable datasets.

As data volumes and integration complexity increased, the client required a more scalable and maintainable enterprise data integration platform capable of handling growing workloads and supporting future expansion.

The platform evolved into a core part of the client's enterprise data integration, enabling centralized control over data flows and improving governance across multiple sources. Data integration for BI remained consistent, even as the number of APIs and data formats increased. The system became a critical layer for managing structured and unstructured data across the organization.

request background

Modernizing API and data integration for BI pipelines

The client approached Acropolium with a need to modernize their existing platform to support efficient API and data integration. The system was responsible for collecting statistical data from 16 different APIs, processing large volumes of incoming data, and delivering prepared datasets to BI analysts.

A key requirement was to improve API data ingestion capabilities. The platform needed to handle continuous data inflow from multiple sources while guaranteeing performance across the entire processing pipeline.

The client also required a more structured approach to API-based data integration, including standardized methods for building and maintaining integration adapters. This step was essential to reduce development time and ensure consistency across integrations.

Additionally, the system needed to support robust data pipelines for BI, enabling efficient data transformation, aggregation, and delivery. The goal was to create a scalable API data pipeline that could support increasing data volumes and more advanced analytics use cases.

challenge

Scaling API data pipelines and improving processing performance

The primary challenge was the limited performance of the existing system. Data parsing processes were slow, which affected the timeliness and reliability of datasets delivered to BI teams. As a result, the client found it difficult to support real-time or near-real-time analytics.

Another challenge was the lack of multithreading capabilities. The system processed data sequentially, which restricted throughput and created bottlenecks as data volumes increased. Scaling the platform required a fundamental redesign of the processing approach.

The platform also lacked sufficient monitoring and control over data processing workflows. Without visibility into execution processes, it was difficult to identify failures, track performance, or manage pipeline operations effectively.

Legacy code complexity further complicated the situation. The PHP-based implementation made maintenance and extension difficult, especially when integrating new data sources or updating existing APIs. This challenge limited the system’s ability to evolve alongside business needs.

Finally, the platform needed to support reliable API data aggregation across multiple sources while maintaining data consistency and accuracy. Achieving this required improvements in fault tolerance, processing logic, and overall system architecture.

goals

- Modernize the legacy system to support scalable API data integration

- Enable high-throughput API data ingestion across multiple sources

- Improve processing speed and reduce latency in data pipelines

- Standardize API-based data integration for faster adapter development

- Ensure reliable API data aggregation and data consistency

- Strengthen monitoring and control over data processing workflows

- Enable scalable data pipelines for BI and analytics use cases

- Prepare the platform for future expansion and advanced reporting capabilities

solution

Designing a high-performance API integration platform for BI pipelines

NestJS, Redis, PostgreSQL, React.js

1 months

6 specialists

Acropolium approached the engagement as a full architectural modernization of an API integration platform, focusing on performance, scalability, and maintainability. The system was redesigned to support high-volume API and data integration workflows and to deliver data reliably to BI systems.

A new backend processing core was implemented using NestJS, enabling a structured and maintainable service architecture. The team introduced an event-driven model powered by Redis queues, which allowed asynchronous processing and significantly improved throughput for API data integration.
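The producer/consumer decoupling behind a queue-driven model can be illustrated with a minimal sketch. It uses an in-memory array as a stand-in for a Redis-backed queue so that it runs without external services; the `IngestionJob` shape, the `InMemoryJobQueue` class, and the retry limit are hypothetical names and values chosen for illustration, not details of the actual platform.

```typescript
// In-memory stand-in for a Redis-backed job queue (assumption: the real
// platform uses Redis queues; this sketch only mirrors the flow).
interface IngestionJob {
  source: string;   // which API the payload came from
  payload: unknown; // raw response body to be processed later
  attempts: number; // how many times processing has been tried
}

type JobHandler = (job: IngestionJob) => void;

class InMemoryJobQueue {
  private readonly pending: IngestionJob[] = [];
  private readonly failed: IngestionJob[] = [];
  private readonly maxAttempts: number;

  constructor(maxAttempts = 3) {
    this.maxAttempts = maxAttempts;
  }

  // Producer side: the API poller only enqueues the payload and returns.
  enqueue(source: string, payload: unknown): void {
    this.pending.push({ source, payload, attempts: 0 });
  }

  // Consumer side: handle jobs in FIFO order. A failing job is re-queued
  // until it reaches maxAttempts, then moved to the failed list.
  drain(handler: JobHandler): void {
    let job = this.pending.shift();
    while (job !== undefined) {
      job.attempts += 1;
      try {
        handler(job);
      } catch {
        if (job.attempts < this.maxAttempts) {
          this.pending.push(job);
        } else {
          this.failed.push(job);
        }
      }
      job = this.pending.shift();
    }
  }

  get failedJobs(): readonly IngestionJob[] {
    return this.failed;
  }

  get pendingCount(): number {
    return this.pending.length;
  }
}
```

In this sketch the code that fetches data never waits for processing to finish. In a Redis-backed setup, the same separation would let consumers run in separate processes and scale independently of the producers.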

The platform was further optimized to support scalable API data integration pipelines that handle high-frequency data streams efficiently. Structured ingestion and transformation layers improved the reliability of API data ingestion and reduced processing delays. This approach produced a robust data pipeline for BI, in which data is continuously collected and prepared for analytics. The resulting API integration platform supports consistent delivery of high-quality datasets for reporting and decision-making.

To address performance limitations, multithreaded data processing was implemented. Our team enabled parallel handling of incoming data streams and eliminated the bottlenecks caused by sequential execution. As a result, the platform achieved a substantial improvement in processing speed and responsiveness.

The core data processing logic was isolated into a dedicated engine, allowing better control over transformations, validation, and custom API data integration workflows. The separation improved maintainability and enabled easier extension of the system as new data sources were added.
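As a rough illustration of what an isolated processing engine might look like, the sketch below runs each record through an ordered list of validation and transformation steps and separates accepted records from rejected ones. The `DataRecord` type, the step functions, and the `ProcessingEngine` class are assumptions made for this example; the case study does not describe the engine's real interface.

```typescript
// Hypothetical record type flowing through the engine.
interface DataRecord {
  source: string;
  metric: string;
  value: number;
}

// A step either passes on a (possibly transformed) record or rejects it.
type StepResult =
  | { status: "ok"; record: DataRecord }
  | { status: "rejected"; reason: string };

type Step = (record: DataRecord) => StepResult;

// Validation step: reject NaN and infinite values.
const requireFiniteValue: Step = (record) =>
  Number.isFinite(record.value)
    ? { status: "ok", record }
    : { status: "rejected", reason: `non-finite value for ${record.metric}` };

// Transformation step: normalize metric names to snake_case.
const normalizeMetricName: Step = (record) => ({
  status: "ok",
  record: {
    ...record,
    metric: record.metric.trim().toLowerCase().replace(/\s+/g, "_"),
  },
});

interface EngineResult {
  accepted: DataRecord[];
  rejected: { record: DataRecord; reason: string }[];
}

class ProcessingEngine {
  private readonly steps: Step[];

  constructor(steps: Step[]) {
    this.steps = steps;
  }

  // Apply every step in order; stop at the first rejection for a record.
  run(records: DataRecord[]): EngineResult {
    const result: EngineResult = { accepted: [], rejected: [] };
    for (const original of records) {
      let current = original;
      let failure: string | undefined;
      for (const step of this.steps) {
        const outcome = step(current);
        if (outcome.status === "rejected") {
          failure = outcome.reason;
          break;
        }
        current = outcome.record;
      }
      if (failure === undefined) {
        result.accepted.push(current);
      } else {
        result.rejected.push({ record: original, reason: failure });
      }
    }
    return result;
  }
}
```

Keeping steps as plain functions means a new rule can be added or reordered without touching the ingestion code, which is one plausible way such a separation helps maintainability.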

The solution also introduced standardized approaches for building integration adapters. These practices reduced development time and ensured consistency across integrations, supporting the scalability expected of an enterprise data integration platform.
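One way to picture a standardized adapter contract is sketched below. The `SourceAdapter` interface, the `NormalizedRecord` shape, the `HrPlatformAdapter` example, and its payload fields are all hypothetical; the contract actually used on the project is not described here.

```typescript
// Hypothetical common shape that every adapter must produce.
interface NormalizedRecord {
  source: string;     // identifier of the originating API
  metric: string;     // name of the statistical measure
  value: number;      // numeric value of the measure
  recordedAt: string; // ISO 8601 timestamp
}

// Hypothetical contract: an adapter maps one raw payload to normalized records.
interface SourceAdapter<Raw> {
  readonly source: string;
  normalize(raw: Raw): NormalizedRecord[];
}

// Illustrative raw payload for an imaginary HR platform API.
interface HrPayload {
  date: string;
  headcount: number;
  openPositions: number;
}

class HrPlatformAdapter implements SourceAdapter<HrPayload> {
  readonly source = "hr-platform";

  normalize(raw: HrPayload): NormalizedRecord[] {
    const recordedAt = new Date(raw.date).toISOString();
    return [
      { source: this.source, metric: "headcount", value: raw.headcount, recordedAt },
      { source: this.source, metric: "open_positions", value: raw.openPositions, recordedAt },
    ];
  }
}

// Registry so the pipeline can look up the right adapter by source name.
class AdapterRegistry {
  private readonly adapters = new Map<string, SourceAdapter<unknown>>();

  register<Raw>(adapter: SourceAdapter<Raw>): void {
    this.adapters.set(adapter.source, adapter as SourceAdapter<unknown>);
  }

  normalize(source: string, raw: unknown): NormalizedRecord[] {
    const adapter = this.adapters.get(source);
    if (!adapter) {
      throw new Error(`No adapter registered for source "${source}"`);
    }
    return adapter.normalize(raw);
  }
}
```

With a contract like this, adding a new data source amounts to writing one class and registering it, while downstream processing continues to see a single record shape.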

- Designed a new backend processing core using NestJS

- Implemented event-driven architecture with Redis queues

- Introduced multithreaded data processing

- Isolated data processing into a dedicated engine

- Standardized integration adapter development approach

- Optimized API data ingestion and transformation workflows

- Implemented full unit test coverage for processing logic

- Added monitoring and control for execution processes

- Improved fault tolerance and error handling mechanisms

- Enhanced security and governance across data pipelines

outcome

High-performance and scalable data integration platform for BI

client feedback

Before modernization, our data pipelines were a constant constraint on analytics. Today, the platform processes high volumes of data without delays, and we have full control over how data flows across systems. What changed is our ability to rely on data as a stable foundation for decision-making. We now onboard new data sources faster and maintain consistency across pipelines without introducing additional complexity.