Your pilot worked. The demo looked great. Then someone asked to roll it out to production – and the whole thing quietly died in a backlog somewhere.

If that sounds familiar, you’re not alone. Despite agentic AI being the biggest enterprise technology story of the past 18 months, only about 11% of companies that ran pilots in 2025 actually shipped them into production. Not because the technology doesn’t work – it does. But because most organizations ran into the same four problems, at the same four stages, and no one warned them in advance.

At Acropolium, we’ve been building AI-powered systems for enterprises across healthcare, fintech, hospitality, logistics, and other industries for over 7 years. In the past 18 months, we’ve seen more agentic AI projects stall – or fail entirely – than in any equivalent period of our company’s history. The patterns are consistent enough that we can now predict, within the first discovery conversation, where a deployment is likely to break.

This guide documents those patterns. It covers what agentic AI trends in 2026 actually mean for production deployments, which workflows are succeeding, and the architecture decisions that separate the 11% who shipped from the 89% who didn’t.

What is Agentic AI (and What Makes It Different from What You Already Have)

The simplest way to put it: a chatbot answers your question and stops. An AI agent receives a goal and keeps working until it’s done.

Concretely: your support chatbot tells a customer their refund takes 5–7 business days. An AI agent checks the order status, verifies the refund eligibility against policy, initiates the refund in the payment system, sends the confirmation email, and updates the CRM record – without a human touching any step. That’s not a smarter chatbot. That’s a fundamentally different thing.

The Perceive → Plan → Act → Observe Loop

Every production agent runs on some version of this cycle. It perceives its environment (reads data from connected systems), plans a sequence of steps toward the goal, acts using external tools (APIs, databases, code execution), observes what happened, and iterates. What enables this is tool use: the ability to call real enterprise systems and get real results back. An agent without tools is still just a text generator.

The quality of that tool layer – how reliably an agent can read from and write to your actual CRM, ERP, or custom systems – is the single biggest factor in whether a deployment works in production. We’ll come back to this.
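To make the loop concrete, here is a minimal sketch of the perceive, plan, act, observe cycle applied to the refund example above. Everything in it is an assumption for illustration: the `make_tools` factory, the in-memory order store, and the rule-based `plan` function, which stands in for a model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


def make_tools(orders: Dict[str, dict]) -> Dict[str, Callable[[str], dict]]:
    """Build hypothetical tools over an in-memory order store (stand-in for real APIs)."""
    def check_order(order_id: str) -> dict:
        return dict(orders[order_id])          # read from the "order system"

    def issue_refund(order_id: str) -> dict:
        orders[order_id]["refunded"] = True    # write to the "payment system"
        return {"refund_issued": True}

    return {"check_order": check_order, "issue_refund": issue_refund}


@dataclass
class AgentState:
    goal: str
    order_id: str
    observations: List[dict] = field(default_factory=list)
    done: bool = False


def plan(state: AgentState) -> Optional[str]:
    """Placeholder for the model: choose the next tool based on what was observed."""
    if not state.observations:
        return "check_order"
    last = state.observations[-1]
    if last.get("status") == "delivered" and not last.get("refunded"):
        return "issue_refund"
    return None  # nothing left to do


def run_agent(state: AgentState, tools: Dict[str, Callable[[str], dict]],
              max_steps: int = 5) -> AgentState:
    for _ in range(max_steps):                 # hard cap so the loop cannot run away
        tool_name = plan(state)                # plan
        if tool_name is None:
            state.done = True
            break
        result = tools[tool_name](state.order_id)  # act
        state.observations.append(result)          # observe, then iterate
    return state


orders = {"A-100": {"status": "delivered", "refunded": False}}
final = run_agent(AgentState("refund order A-100", "A-100"), make_tools(orders))
print(final.done, orders["A-100"]["refunded"])  # prints: True True
```

The step cap and the explicit tool registry are the two parts worth keeping in any real version: the first bounds cost, the second defines exactly what the agent is allowed to touch.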

How Agentic AI Differs from RPA and Chatbots

Here’s the version of the comparison that actually helps you decide:

| Capability | RPA | Chatbot / Gen AI | Agentic AI |
|---|---|---|---|
| Can it handle exceptions? | No – breaks | No – escalates | Yes – reasons and retries |
| Multi-step workflows? | Only if pre-scripted | No | Yes – plans dynamically |
| Works across systems? | One system at a time | No | Yes – API + tool orchestration |
| Adapts when data changes? | No – needs re-scripting | Limited | Yes – by design |
| Learns from past runs or feedback? | No | Within session only | Yes – with a memory layer |

If you’re still deciding which of the three fits your specific problem, the breakdown in AI agents vs RPA vs chatbots walks through exactly that decision.

Why 2026 is Actually a Turning Point in Agentic AI (Not Just Another Trend Year)

People have been calling the next 12 months “the year of agentic AI” since 2023. So why does 2026 feel different?

Because three things changed in 2025 that weren’t true before. Not capability improvements – infrastructure improvements. The boring stuff that determines whether enterprise deployments live or die.

Tool protocols got standardized. The Model Context Protocol (MCP) and Google’s Agent-to-Agent (A2A) protocol – backed by 50+ companies including Microsoft and Salesforce – give AI agents a reliable way to discover and call external tools. Before these protocols, every integration was custom-built, brittle, and expensive to maintain. That’s no longer the case.

Orchestration frameworks matured. LangGraph, AutoGen, CrewAI, and IBM’s watsonx now give engineering teams stable primitives for multi-agent coordination. The effort required to get a multi-agent system from prototype to production has dropped significantly in the last 12 months.

Governance tooling exists. Finance, healthcare, and legal teams were sitting out of agentic AI because there were no audit trails, no rollback mechanisms, and no way to define who was accountable when an agent made a mistake. Those tools exist now. That’s why Gartner projects 40% of enterprise applications will embed task-specific AI agents by the end of 2026 – up from under 5% in 2025.

There’s also a data flywheel dynamic worth knowing about: the longer an agent operates on your business data, the harder it becomes for a competitor to replicate what it knows. Capgemini research shows 93% of leaders believe organizations that scale AI agents in the next 12 months will gain a competitive edge that’s genuinely difficult to reverse. The window for first-mover advantage is real – but it closes as this technology becomes table stakes.

Why Most Agentic AI Pilots Don’t Reach Production

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027. MIT’s NANDA study of 300+ enterprise initiatives found that 95% of enterprise AI pilots fail to deliver measurable returns. Neither of these is a technology failure. The models work. The failure is architectural and organizational.

The same four root causes appear in failed deployment after failed deployment. If you’re planning a deployment, here’s what to watch for – and why understanding the broader AI trends shaping enterprise adoption matters just as much as understanding the technology itself.

Problem #1: Your Data Isn’t Agent-Ready

70–85% of AI project failures trace back to data problems. Only 12% of enterprises say their data is of sufficient quality and accessibility for AI (according to Precisely/Drexel University). The issue isn’t volume – most enterprises have years of data. The issue is that AI agents need clean, structured, real-time accessible data with clear permission controls. They can’t reason reliably over 18-month-old PDFs, inconsistently formatted spreadsheets, and siloed databases with no API layer.

The first thing we do at Acropolium before writing a line of agent code is a data audit. Not because it’s in an onboarding checklist – but because we’ve watched this problem kill more deployments than any other factor, often weeks after a pilot that looked completely healthy. One healthcare client came to us with a failed pilot they couldn’t explain. The agent had scored well in testing. In production, it was making inconsistent decisions. The cause: three separate EHR systems with different schemas for the same patient data, none of which had been reconciled. The agent wasn’t broken – it was reasoning correctly over bad inputs.
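As a rough illustration of what reconciling those schemas involves, the sketch below maps three differently shaped patient records onto one canonical form. The source names, field names, and date formats are all hypothetical; a real audit would derive them from the actual systems.

```python
from datetime import date, datetime
from typing import Dict

# Hypothetical per-source mappings: which field holds what, and how dates are written.
SOURCE_SCHEMAS: Dict[str, Dict[str, str]] = {
    "ehr_a": {"id": "patient_id", "name": "full_name", "dob": "dob",
              "dob_format": "%Y-%m-%d"},
    "ehr_b": {"id": "PatientID", "name": "Name", "dob": "BirthDate",
              "dob_format": "%m/%d/%Y"},
    "ehr_c": {"id": "mrn", "name": "patient_name", "dob": "date_of_birth",
              "dob_format": "%d.%m.%Y"},
}


def normalize(source: str, record: dict) -> dict:
    """Convert one source record into the canonical shape the agent reasons over."""
    schema = SOURCE_SCHEMAS[source]
    missing = [schema[k] for k in ("id", "name", "dob") if schema[k] not in record]
    if missing:
        # Fail loudly instead of letting the agent reason over partial data.
        raise ValueError(f"{source}: missing fields {missing}")
    dob: date = datetime.strptime(record[schema["dob"]], schema["dob_format"]).date()
    return {
        "patient_id": str(record[schema["id"]]).strip(),
        "name": " ".join(str(record[schema["name"]]).split()),  # collapse whitespace
        "dob": dob,
    }


a = normalize("ehr_a", {"patient_id": "1042", "full_name": "Ana  Ruiz", "dob": "1980-03-04"})
b = normalize("ehr_b", {"PatientID": 1042, "Name": "Ana Ruiz", "BirthDate": "03/04/1980"})
c = normalize("ehr_c", {"mrn": " 1042", "patient_name": "Ana Ruiz", "date_of_birth": "04.03.1980"})
print(a == b == c)  # prints: True
```

Declaring the date format per source, instead of guessing it from the value, is deliberate: a string like 03/04/1980 is ambiguous without knowing where it came from.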

Among the latest trends in artificial intelligence, data readiness has emerged as the single most discussed prerequisite for production deployments – and the most consistently underestimated.

Watch out for: if anyone on your pilot team said “we’ll fix the data later,” that’s the problem that will kill your production deployment.

Problem #2: The Integration Layer Doesn’t Exist

The most common root cause of what the industry now calls “Stalled Pilot Syndrome” is straightforward: agents that can read data but can’t act on it.

Your AI agent worked in the demo because engineers gave it clean, controlled inputs. In production, it hits Salesforce’s undocumented API behaviors, your ERP’s rate limits, five-year-old middleware nobody fully understands, and custom field schemas that change without notice. Without a dedicated integration layer that handles authentication, schema normalization, retry logic, and error recovery – agents fail within days.

87% of IT leaders rate system interoperability as “very important” or “crucial” to successful agentic AI adoption. This is one of the most consistent technology trends across regulated and unregulated industries alike: the organizations succeeding in production aren’t the ones with the best models – they’re the ones with the most robust integration layers.

The problem we see most often isn’t a broken agent – it’s an agent that can read your systems but can’t reliably act on them. It calls the right API, gets back an error it wasn’t designed to handle, and stalls. In one fintech deployment, we traced a 34% action failure rate to a single undocumented rate limit on a legacy payments API. The agent logic was sound. The connector wasn’t. We now build the integration layer as a separate engineering workstream from the agent itself – because it’s a separate problem, and conflating the two is where timelines break.
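The failure mode described here, an agent stalling on an error it was never designed to handle, is usually addressed in the connector rather than in the agent. Below is a minimal sketch of that idea, assuming a hypothetical `RateLimitError` raised by the connector and a simple exponential backoff policy; real APIs signal rate limits in their own ways.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical error a connector raises when the upstream API throttles it."""
    def __init__(self, retry_after: Optional[float] = None):
        super().__init__("rate limited")
        self.retry_after = retry_after


def call_with_retry(fn: Callable[[], T], *, max_attempts: int = 4,
                    base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry only throttling errors; any other exception propagates immediately."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except RateLimitError as exc:
            if attempt == max_attempts:
                raise  # give up so the orchestrator can escalate
            # Prefer the server's hint; otherwise back off exponentially.
            delay = (exc.retry_after if exc.retry_after is not None
                     else base_delay * 2 ** (attempt - 1))
            sleep(delay + random.uniform(0, 0.1 * delay))  # small jitter
    raise RuntimeError("unreachable")  # loop always returns or raises


calls = {"n": 0}

def flaky_payment_call() -> dict:
    calls["n"] += 1
    if calls["n"] < 3:
        raise RateLimitError()
    return {"status": "ok"}

# A no-op sleep keeps the demo instant; production code would use the default.
print(call_with_retry(flaky_payment_call, sleep=lambda _: None), calls["n"])
```

Keeping this logic in the connector means the agent only ever sees two outcomes, a result or a clean failure it can escalate, which is what makes a separate integration workstream practical.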

Problem #3: One AI Agent Is Doing Too Much

A single agent handling end-to-end invoice reconciliation, exception escalation, approval routing, and supplier communication looks great in a product roadmap. In production, the prompt grows unmanageable, error rates compound across 10+ steps, and debugging becomes nearly impossible. You can’t tell whether the reasoning failed, the tool call failed, or the output format failed – because everything is entangled in one chain.

The architecture that actually holds up is an orchestrator + specialist model: one coordinator agent that holds the goal and delegates to focused sub-agents – one for data retrieval, one for reasoning, one for system writes, one for human escalation. This multi-agent approach is quickly becoming one of the defining IT trends in enterprise software architecture – a shift away from monolithic automation toward modular, delegated systems. That shift is happening because multi-agent architectures achieve 45% faster problem resolution and 60% more accurate outcomes than single-agent systems.

When we rebuilt a logistics client’s stalled single-agent deployment into a four-specialist structure using model orchestration – routing each sub-task to the model best suited for it – average processing time dropped by 40% and error rates became isolatable for the first time. The orchestration design didn’t change what the system did. It changed whether anyone could maintain it.
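A stripped-down sketch of the orchestrator + specialist shape is shown below. It is not the client system: the invoice data, the three specialist functions, and the fixed pipeline order are assumptions chosen to show one property, namely that a failure can be pinned to a single step.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical invoice store standing in for an ERP.
INVOICES: Dict[str, dict] = {
    "INV-1": {"amount": 120.0, "po_amount": 120.0},
    "INV-2": {"amount": 150.0, "po_amount": 120.0},
}


@dataclass
class StepResult:
    agent: str
    ok: bool
    output: dict = field(default_factory=dict)
    error: str = ""


def retrieval_agent(ctx: dict) -> dict:
    return {"invoice": INVOICES[ctx["invoice_id"]]}

def reasoning_agent(ctx: dict) -> dict:
    inv = ctx["invoice"]
    return {"matched": inv["amount"] == inv["po_amount"]}

def write_agent(ctx: dict) -> dict:
    if not ctx["matched"]:
        raise ValueError("unmatched invoice, refusing to post")
    return {"posted": True}


PIPELINE: List[Tuple[str, Callable[[dict], dict]]] = [
    ("retrieval", retrieval_agent),
    ("reasoning", reasoning_agent),
    ("write", write_agent),
]


def orchestrate(goal_ctx: dict) -> List[StepResult]:
    """Run specialists in order; on failure, record which one failed and escalate."""
    ctx, trace = dict(goal_ctx), []
    for name, agent in PIPELINE:
        try:
            output = agent(ctx)
        except Exception as exc:
            trace.append(StepResult(name, False, error=str(exc)))
            trace.append(StepResult("escalation", True, {"handed_to_human": True}))
            break
        ctx.update(output)
        trace.append(StepResult(name, True, output))
    return trace


for step in orchestrate({"invoice_id": "INV-2"}):
    print(step.agent, step.ok, step.error)
```

A production coordinator would typically delegate dynamically instead of walking a fixed list, but the per-step trace is the part that makes errors isolatable.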

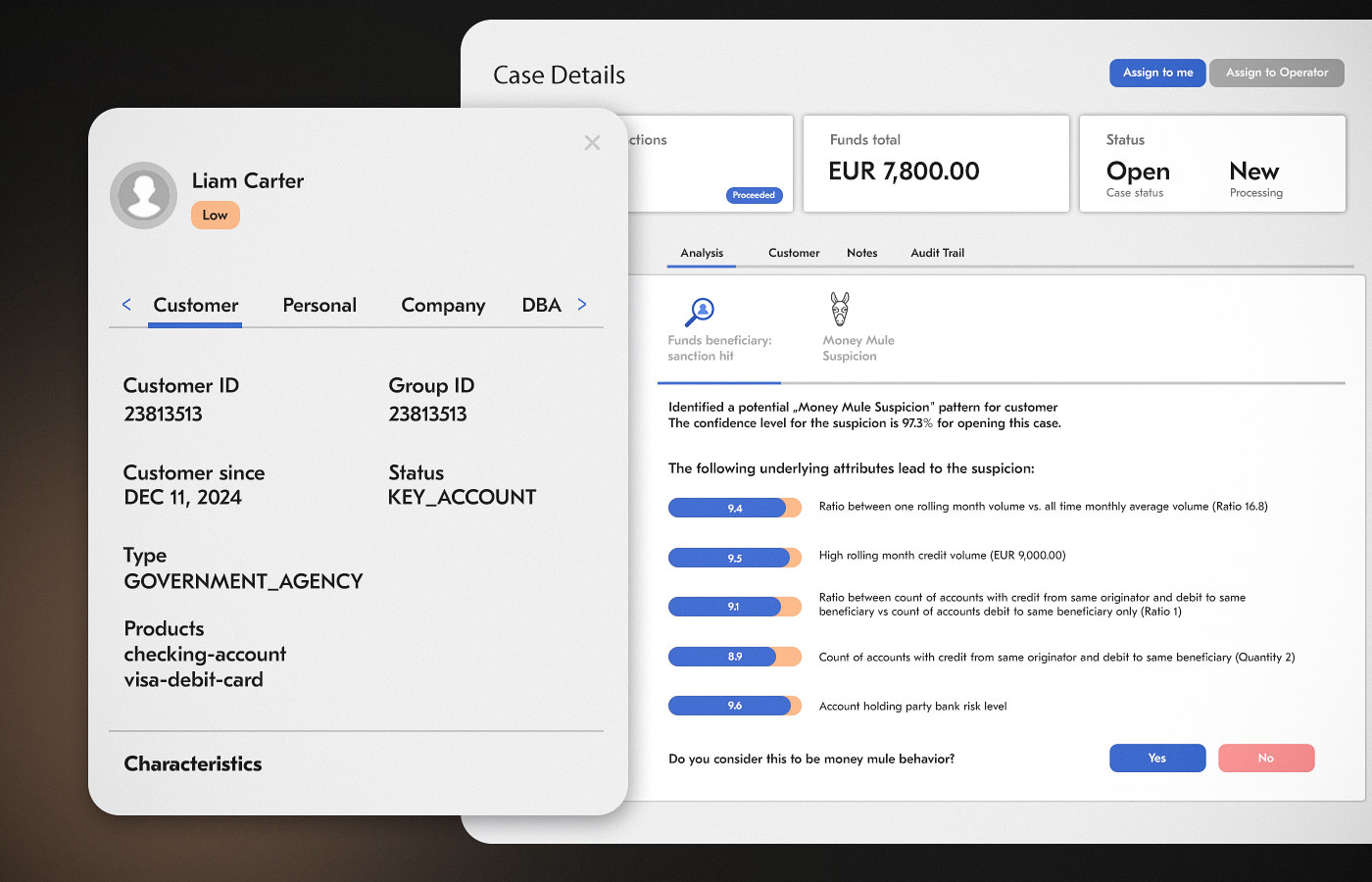

Problem #4: Governance is Added Last – and Then It Kills the Project

Here’s the scenario. The pilot succeeds. You go to scale. Security and compliance teams review it. They find no audit logs, no defined permission scopes, no rollback mechanism, and no documented accountability for when the AI agent makes a wrong call. The deployment gets blocked. You now have to rebuild from scratch.

Among the artificial intelligence trends gaining traction in regulated industries, governance-first architecture is no longer optional – it’s the baseline expectation from compliance and legal teams before any deployment reaches production.

We’ve been in that conversation on the client side. A European financial services firm came to us after exactly this outcome: six months of development, a working agent, blocked at the security review stage because there was no way to produce a complete decision trail for regulators. The rebuild took three months.

The lesson we took from it: every agent deployment now starts with a governance specification before the first line of agent code is written. Role-based access controls, decision logging, escalation thresholds, human checkpoints.
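To give a sense of how small the starting point can be, here is a minimal sketch of three of those elements (permission scopes, an escalation threshold, and decision logging) expressed as code. The `GovernanceSpec` fields, the action names, and the threshold value are illustrative assumptions, not a standard or a description of any specific product.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import FrozenSet, List


@dataclass(frozen=True)
class GovernanceSpec:
    allowed_actions: FrozenSet[str]   # least-privilege scope for this agent
    approval_threshold: float         # amounts above this need a human checkpoint


@dataclass
class DecisionLog:
    entries: List[str] = field(default_factory=list)

    def record(self, **fields) -> None:
        entry = {"ts": datetime.now(timezone.utc).isoformat(), **fields}
        # One JSON line per decision; an append-only store would back this in practice.
        self.entries.append(json.dumps(entry, sort_keys=True))


def authorize(spec: GovernanceSpec, log: DecisionLog,
              agent: str, action: str, amount: float) -> str:
    """Decide whether an action may proceed, and log the decision either way."""
    if action not in spec.allowed_actions:
        decision = "denied"
    elif amount > spec.approval_threshold:
        decision = "needs_human_approval"
    else:
        decision = "allowed"
    log.record(agent=agent, action=action, amount=amount, decision=decision)
    return decision


spec = GovernanceSpec(frozenset({"issue_refund"}), approval_threshold=200.0)
log = DecisionLog()
print(authorize(spec, log, "refund-agent", "issue_refund", 50.0))    # allowed
print(authorize(spec, log, "refund-agent", "issue_refund", 900.0))   # needs_human_approval
print(authorize(spec, log, "refund-agent", "delete_customer", 0.0))  # denied
```

Logging denied and escalated decisions alongside allowed ones is what lets a reviewer reconstruct the full decision trail later.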

How the Latest AI Technology Changes This Picture

The latest AI technology entering production in 2026 – standardized tool protocols like MCP and A2A, mature orchestration frameworks like LangGraph and AutoGen, and governance tooling that didn’t exist 18 months ago – doesn’t eliminate these four problems. But it does make them more solvable than they’ve ever been.

Understanding the latest trends in AI means recognizing that the capability gap between what models can do and what enterprises can safely deploy has narrowed significantly. The remaining gap is almost entirely architectural and organizational. The new AI technology arriving in 2026 is mostly infrastructure: better AI connectors, better observability, better multi-model routing. These are the tools that close the pilot-to-production gap – if the organization is prepared to use them correctly.

2026 Agentic AI Adoption Trends in Enterprise: Where It’s Actually Working and Why

Rather than listing which industries are “adopting agentic AI” – the more useful question is: which workflow patterns are generating real ROI across industries? Because the same patterns keep appearing in finance, healthcare, hospitality, and logistics.

High-Volume Document Processing

This is the highest-success category in 2026. If your organization processes thousands of invoices, contracts, claims, or intake forms repeatedly – with consistent rules but variable formats – an AI agent will outperform both your human team and RPA, by a wide margin.

One fintech client came to us with a specific challenge: their legal team was reviewing every contract clause by hand before signing, which meant deals that should close in days were taking weeks. External legal costs were rising and the team was becoming a growth constraint. The question wasn’t whether to automate – it was how to do it in a way that the legal team would actually trust.

What Acropolium built was an AI contract management system where the AI agent handled extraction and clause flagging autonomously – surfacing risk, categorizing clauses, flagging deviations from standard terms – and a human reviewed every flagged item before any action was taken.

The legal team’s workload for contract review dropped by 75%. More importantly, their trust in the system increased over time as they saw what the agent caught and what it passed. That progression – from skepticism to reliance – is what production adoption actually looks like.

Tier-1 Customer Support Resolution

Customer support has the furthest-along agentic AI adoption in 2026, because the economics are immediate and measurable: cost per interaction, resolution time, escalation rate. Robinhood scaled its AI-driven customer service from 500 million to 5 billion tokens processed daily while cutting operational costs by 80%. That’s not a productivity gain – it’s a structural cost reduction.

What makes customer support tractable for AI agents: the intent space is bounded (there are only so many reasons customers contact you), outcomes are measurable in real time, and a well-designed escalation path keeps the cost of a wrong answer manageable.

When an Italian hotel group approached us about their guest services operation – staff were fielding the same requests on loop, response times were inconsistent, and the experience varied completely depending on who picked up – the problem wasn’t staffing. It was that every interaction started from zero.

The AI agent Acropolium built handled guest requests, reservation queries, and service coordination autonomously across channels, with instant escalation to staff for anything outside its confidence threshold.

Guest satisfaction scores improved by 15% and operational overhead dropped by 30%. The bigger shift: staff stopped spending time on routine requests and started having the guest conversations that actually require a person.
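The escalation rule at the center of that design can be sketched in a few lines. The threshold value, the intent names, and the keyword-based `classify` function below are placeholders; in a real system the classification and its confidence would come from a model.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.8  # hypothetical value; tune against real escalation data


@dataclass
class Classification:
    intent: str
    confidence: float


def classify(message: str) -> Classification:
    """Placeholder for a model call; keyword rules keep the sketch runnable."""
    text = message.lower()
    if "checkout" in text or "check-out" in text:
        return Classification("late_checkout", 0.93)
    if "towel" in text:
        return Classification("housekeeping", 0.88)
    return Classification("unknown", 0.30)


def route(message: str) -> str:
    result = classify(message)
    if result.confidence < CONFIDENCE_THRESHOLD:
        return "escalate_to_staff"      # anything uncertain goes to a person
    return f"handle:{result.intent}"


print(route("Can I get a late checkout tomorrow?"))   # handle:late_checkout
print(route("My invoice shows a strange charge"))     # escalate_to_staff
```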

Healthcare Admin – Documentation, Not Diagnosis

Healthcare agentic AI is advancing specifically in documentation and administration tasks. AtlantiCare’s Clinical AI Agent reduced documentation time by 42%, saving 66 minutes per physician per day, with an 80% adoption rate. Why this worked where others failed: the agent only handled documentation. It never touched clinical decision-making. The scope was narrow, the value was obvious, and the compliance exposure was minimal.

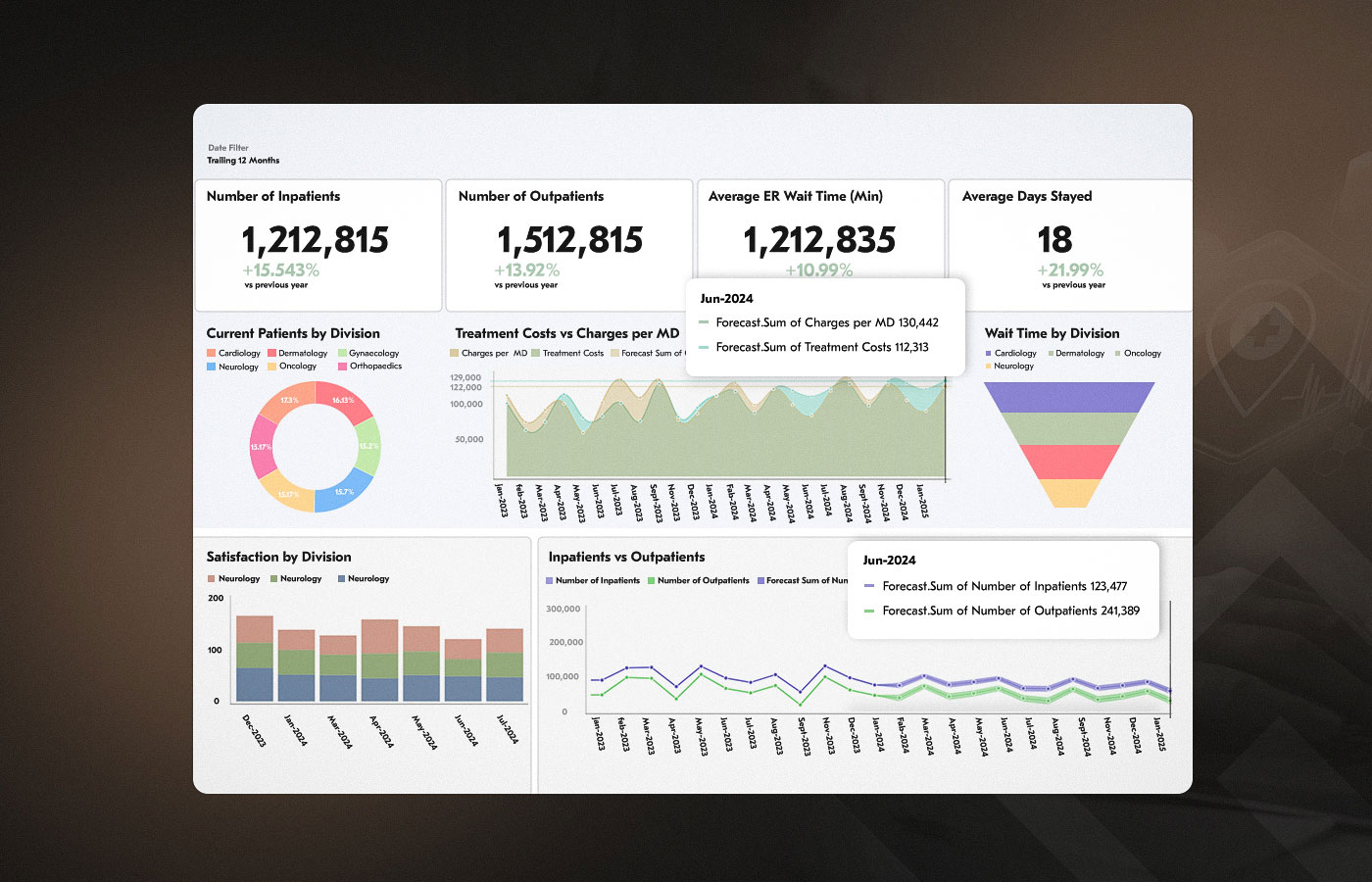

We applied the same principle for a healthcare client: a fast-growing hospital network focused on improving patient care through smart tech solutions. They were dealing with a different version of the same problem. Resource planning was reactive, department heads forecast demand manually on spreadsheets, and the result was a rotating cycle of over- and understaffing. What they needed wasn’t an agent that made scheduling decisions – clinical staff correctly rejected that. What they needed was an AI agent that processed historical patterns and surfaced demand forecasts that a human planner could act on.

Acropolium built a predictive analytics system that kept the agent strictly in the analysis layer, HIPAA and GDPR compliant, with scheduling authority remaining entirely with the clinical team.

Adoption followed naturally, because the AI agent was making the human’s job easier without trying to replace their judgment.

Compliance Reporting in Regulated Industries

European enterprises under GDPR and the EU AI Act have found compliance reporting to be a particularly strong agentic AI use case – partly because the explainability requirements of good governance architecture map directly onto what agents produce anyway: every decision logged, every source cited, every exception documented. The audit trail isn’t a compliance add-on. It’s just how the system works.

For a financial services client in a heavily regulated environment, the compliance team’s problem wasn’t knowledge – it was assembly time. Analysts spent days pulling data from multiple systems, reconciling formats, and generating reports that regulators then scrutinized line by line.

The AI agent Acropolium built as part of a comprehensive AI-powered anti-money laundering solution handled data retrieval, reconciliation, and report generation – reducing reporting time by 20% and improving fraud detection accuracy by 45% through pattern analysis that human analysts didn’t have capacity to run. A compliance officer reviewed every report before submission.

The AI agent didn’t replace the judgment – it replaced the assembly work that was consuming the time needed for judgment.

The Agentic AI Trends Worth Paying Attention to in 2026

The future trends in agentic AI applications that matter in 2026 aren’t about new model capabilities. They’re about architecture patterns that are becoming standard. Here’s what’s actually changing:

Multi-Agent Collaboration: From Single Agents to Agent Networks

The dominant pattern emerging in production deployments is what Salesforce calls the “orchestrated workforce” model: a primary coordinator AI agent delegates tasks to specialist AI agents, each optimized for a specific function. One agent retrieves data. Another reasons about it. Another writes to your CRM. Another handles the email.

This mirrors how functional teams work – and it solves the reliability problem that kills single-agent systems. Organizations using multi-agent architectures see 45% faster problem resolution and 60% more accurate outcomes. Microsoft’s Copilot Studio uses dynamic, context-aware delegation rather than static routing rules – the coordinator AI agent reasons about who should handle each sub-task based on what’s happening, not a predefined map.

The practical implication: the first architecture question to answer in any AI agent development project isn’t “what should the AI agent do” – it’s “which steps can a specialist AI agent handle autonomously, and which transitions need a human in the loop.” That boundary is what determines both reliability and your compliance posture. Getting it wrong in the software architecture is much cheaper than getting it wrong in production.

Model Orchestration and Multi-Model Systems

Smart teams in 2026 are routing different sub-tasks to different models: a fast, cheap model for classification; a stronger reasoning model for exception handling; a fine-tuned domain model for regulated output formats. This multi-model AI integration approach cuts costs significantly – while improving accuracy, because each model is doing what it’s actually good at.

Both IBM’s watsonx and Salesforce’s Agentforce 360 are built LLM-agnostic: they route across OpenAI, Anthropic, Mistral, and custom models without locking you into one provider. Vendor independence at the model layer isn’t a nice-to-have – it’s a strategic requirement. An architecture that depends on a single provider is one vendor decision away from an expensive rebuild.

The multi-model routing architecture we design for our clients at Acropolium builds that flexibility in from the start, before there’s pressure to cut corners to ship faster.
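At its simplest, multi-model routing is a lookup from task type to model behind a provider-neutral interface. The sketch below assumes hypothetical model names and stub clients; the point is that swapping a provider means changing one entry in a table, not the calling code.

```python
from typing import Callable, Dict, Tuple

# Hypothetical routing table: task type to model name.
ROUTES: Dict[str, str] = {
    "classification": "small-fast-model",
    "exception_handling": "strong-reasoning-model",
    "regulated_output": "fine-tuned-domain-model",
}
FALLBACK_MODEL = "strong-reasoning-model"

ModelClient = Callable[[str], str]  # provider-neutral: prompt in, text out


def route_task(task_type: str) -> str:
    """Pick a model for the task; unknown task types go to the fallback."""
    return ROUTES.get(task_type, FALLBACK_MODEL)


def run_task(task_type: str, prompt: str,
             clients: Dict[str, ModelClient]) -> Tuple[str, str]:
    model = route_task(task_type)
    return model, clients[model](prompt)


# Stub clients stand in for real provider SDK calls.
stub_clients: Dict[str, ModelClient] = {
    name: (lambda prompt, name=name: f"[{name}] {prompt}")
    for name in set(ROUTES.values())
}

print(run_task("classification", "Is this an invoice?", stub_clients))
print(run_task("summarization", "Summarize this thread", stub_clients))
```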

RAG Before Fine-Tuning – Know When to Do Each

Most enterprise agents don’t need generative AI fine-tuning. They need Retrieval-Augmented Generation (RAG): connect your agent to a live, indexed knowledge base so it can answer questions about your policies, products, and processes without retraining. This is faster, cheaper, and easier to update when things change.
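The mechanics are simple enough to sketch without any libraries. The example below uses a toy knowledge base and cosine similarity over word counts in place of an embedding index, which is an assumption made to keep it self-contained; the document texts and the prompt wording are also illustrative.

```python
import math
import re
from collections import Counter
from typing import Dict, List

# Toy knowledge base; updating a policy means editing an entry, not retraining.
DOCS: Dict[str, str] = {
    "refund_policy": "Refunds are issued to the original payment method within 5 to 7 business days.",
    "shipping_policy": "Standard shipping takes 3 to 5 business days within the EU.",
    "warranty_policy": "Hardware is covered by a 24 month warranty from the delivery date.",
}


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def similarity(query_tokens: List[str], doc_tokens: List[str]) -> float:
    """Cosine similarity over raw term counts (a stand-in for vector search)."""
    q, d = Counter(query_tokens), Counter(doc_tokens)
    dot = sum(q[t] * d[t] for t in q)
    norm = (math.sqrt(sum(v * v for v in q.values()))
            * math.sqrt(sum(v * v for v in d.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 1) -> List[str]:
    q = tokenize(query)
    ranked = sorted(DOCS, key=lambda doc_id: similarity(q, tokenize(DOCS[doc_id])),
                    reverse=True)
    return ranked[:k]


def build_prompt(query: str, k: int = 1) -> str:
    """Assemble the retrieved passages and the question into one model prompt."""
    context = "\n".join(f"[{doc_id}] {DOCS[doc_id]}" for doc_id in retrieve(query, k))
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {query}")


print(build_prompt("How long do refunds take?"))
```

The model itself never changes here. Only the context passed to it does, which is why RAG is cheaper to keep current than a fine-tuned model.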

Fine-tuning is the right call in three situations:

- your AI agent needs to consistently produce output in a specific regulated format (clinical notes, legal filings, financial reports);
- your vocabulary or domain logic is specialized enough that general-purpose LLMs produce inconsistent results; or
- your data residency obligations prevent sending proprietary content to a cloud inference endpoint.

At Acropolium, the mistake we see most often is clients fine-tuning when RAG would have been sufficient – spending months on training runs for problems that could have been solved with a well-structured knowledge base. The right approach to LLM customization starts with that decision, not a model selection. Getting it wrong early costs months of rework.

Future Technology Trends and What They Mean for Your Deployment

The future technology trends worth watching aren’t the ones on the frontier – inter-enterprise agent networks, autonomous negotiation between AI systems, fully self-improving architectures. Those are real trajectories, but they’re not 2026 problems for most enterprises.

The new artificial intelligence technology that matters right now is the kind that makes current deployments more reliable: multi-model routing that cuts inference costs without sacrificing accuracy; RAG pipelines that keep agent knowledge current without retraining; governance layers that produce the audit trails regulators actually require.

The companies pulling ahead are the ones treating these not as experimental capabilities but as production requirements – and building accordingly.

How to Select the Right Workflows for Agentic AI

One of the most reliable failure patterns is picking the wrong starting point. “Let’s automate everything” is not a strategy. Neither is “let’s start with our most complex process because the payoff is biggest.” The workflows with the highest production success rates share four characteristics – and when one is missing, it’s usually the reason the deployment stalled.

| What to look for | Why it matters | Examples |
|---|---|---|
| High volume + repetition | ROI compounds quickly; the optimization cost is amortized over thousands of executions | Invoice processing, customer triage, report generation |
| Measurable outcome | You can measure whether the agent is working without ambiguity | Data extraction accuracy, resolution rate, cycle time |
| Bounded decision space | Fewer edge cases means fewer failure modes; escalation path is well-defined | Tier-1 support, document classification, compliance checks |
| Recoverable errors | When the agent gets it wrong, the cost is time – not legal exposure or patient safety | Internal reports, scheduling, drafting (with human review) |

A simple practical test: Does your team perform this workflow more than 500 times a month? Does it have consistent inputs? Can the output be reviewed before it affects anything external? If yes to all three, you have a viable first deployment candidate.
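The three questions translate directly into a screening check that a team could run over a list of candidate workflows. The `Workflow` fields and the sample entries below are hypothetical; the 500-runs-per-month cutoff comes from the test above.

```python
from dataclasses import dataclass
from typing import List

MIN_RUNS_PER_MONTH = 500  # threshold from the practical test above


@dataclass
class Workflow:
    name: str
    runs_per_month: int
    consistent_inputs: bool
    reviewable_before_external_effect: bool


def is_first_deployment_candidate(w: Workflow) -> bool:
    """All three answers must be yes: volume, consistent inputs, reviewable output."""
    return (w.runs_per_month > MIN_RUNS_PER_MONTH
            and w.consistent_inputs
            and w.reviewable_before_external_effect)


candidates: List[Workflow] = [
    Workflow("invoice processing", 4200, True, True),
    Workflow("contract negotiation", 40, False, True),
    Workflow("outbound payment release", 900, True, False),
]

for w in candidates:
    print(f"{w.name}: {is_first_deployment_candidate(w)}")
```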

A good first deployment blueprint: Pick one process that meets all four criteria above. Run a 90-day pilot with full logging. Treat every error as architecture data, not a failure. The patterns you’ll observe in those 90 days will tell you more about your production architecture requirements than any vendor demo or RFP process.

Avoid starting with: clinical decision-making, legal analysis requiring judgment, financial advice, or any process where a single wrong agent decision has serious downstream consequences. These are legitimate long-term targets. They’re bad first deployments.

What a Production-Ready AI Architecture Actually Looks Like

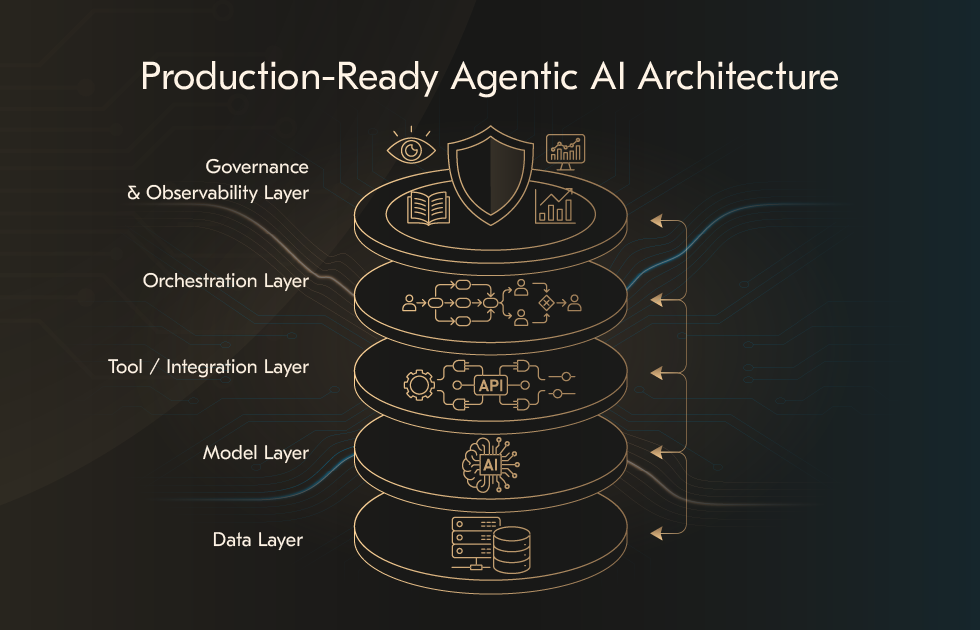

Every organization that successfully deployed an agentic AI system in production built five distinct layers – and didn’t skip any of them. Skip one, and the others become less effective.

Layer 1: Data

Clean. Structured. Real-time accessible. With clear permission controls. Not batch exports to a shared drive. Not three-year-old PDFs. Not 5 different schemas for the same customer record across 4 systems. This is not an AI problem – it’s a data engineering problem. Fix it before you build any AI agents.

Layer 2: Models

One or more foundation models, selected per task type. Increasingly, this means a multi-model AI routing layer rather than a single provider. Build vendor-agnostic from the start – lock-in at the model layer is expensive to undo.

Layer 3: Tool Layer / Integration

The connectors that let AI agents actually act – not just read. This layer handles OAuth, schema normalization, rate limiting, retry logic, and error recovery. Without it, your AI agent can understand what needs to happen but can’t do anything about it. It’s the most underestimated layer in most agentic AI architecture plans, and the most common reason production deployments fail.

Layer 4: Orchestration

The control plane managing how AI agents coordinate: which sub-task goes to which agent, when to parallelize, when to wait, when to escalate. LangGraph, AutoGen, and IBM watsonx all operate here. This is where model orchestration frameworks do their work. Get this layer wrong and AI agents block each other, duplicate work, or lose state between steps.

Layer 5: Governance and Observability

Every action logged. Every decision traceable. Escalation paths defined for every failure mode. Permissions scoped to the minimum required. This layer is not optional in any regulated industry. And it’s not optional in any organization that cares about what happens the first time the AI agent gets something wrong – which it will, eventually.

For the financial model behind this architecture – how to project TCO, expected ROI, and realistic payback timelines before you commit a budget – see our analysis of AI agent unit economics: TCO, ROI, and payback.

What These Agentic AI Future Trends Mean for Your Business

The short version of the modern artificial intelligence trends: the technology works, the economic case is solid, and the main risk is now in execution – specifically, the gap between a working pilot and a production system that operations can rely on.

The companies pulling ahead right now didn’t have the most ambitious AI roadmaps. They picked one workflow, built it production-grade with proper integration and governance, collected 90 days of real performance data, and used that to justify the next deployment.

Incremental, governed, deeply integrated – that’s the pattern that scales. It’s also, incidentally, the pattern that builds internal confidence. Every team we’ve worked with that went slowly on the first deployment went faster on the second, because they stopped second-guessing the foundation.

If your organization has a high-volume workflow that involves document processing, customer triage, compliance reporting, or cross-system data reconciliation, you have a viable first deployment candidate right now. The question is not whether to start – it’s whether you have the right architecture, the right integration layer, and the right governance model to get it to production.

Acropolium’s AI agent development service covers the full journey from workflow selection and architecture design to integration, testing under real-world failure conditions, and governance framework implementation. Our highly experienced teams have deployed AI-powered systems in healthcare, fintech, hospitality, logistics, and other industries – with a focus on getting to production, not just getting to a demo. If you’re looking for a broader perspective on AI transformation economics, our multi-model AI integration and LLM customization services address the model layer decisions that most enterprises get wrong.

Have a workflow in mind but not sure if it’s the right starting point? Our engineers run a free 60-minute technical discovery session: we’ll map your candidate workflow against the four criteria above, identify your integration dependencies, and give you an honest assessment of production readiness. No sales deck. No commitment. Just a working plan.
